Exiting the EU and Britain After Brexit

On 23 June 2016 the United Kingdom voted to leave the European Union, and on 31 January 2020 the UK became the first (and so far only) member state to have left the EU. Brexit became a reality. From Prime Minister David Cameron’s announcement of the referendum, through the vote itself and the subsequent withdrawal negotiations and transition period, the National Institute of Economic and Social Research examined the economic and social implications of this momentous decision.

Articles from the National Institute Economic Review 252

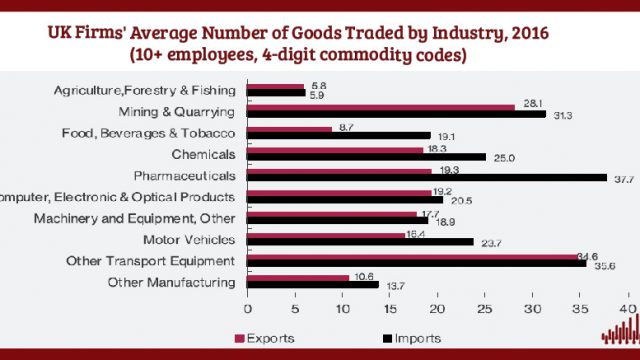

Brexit and impact routes through global value chains

30 Apr 2020

National Institute Economic Review

Articles from the National Institute Economic Review 250

Maintaining stable macroeconomic conditions

30 Oct 2019

National Institute Economic Review

Articles from the National Institute Economic Review 248

Prospects for the UK economy: Forecast Summary

25 Apr 2019

National Institute Economic Review

Introduction: Challenges for Immigration Policy in Post-Brexit Britain

25 Apr 2019

National Institute Economic Review

Immigration Policy from Post-War to Post-Brexit: How New Immigration Policy can Reconcile Public Attitudes and Employer Preferences

25 Apr 2019

National Institute Economic Review

Low-Skilled Employment in a New Immigration Regime: Challenges and Opportunities for Business Transitions

25 Apr 2019

National Institute Economic Review

Is Employer Sponsorship a Good Way to Manage Labour Migration? Implications for Post-Brexit Migration Policies

25 Apr 2019

National Institute Economic Review

Youth Mobility Scheme: The Panacea for Ending Free Movement?

25 Apr 2019

National Institute Economic Review

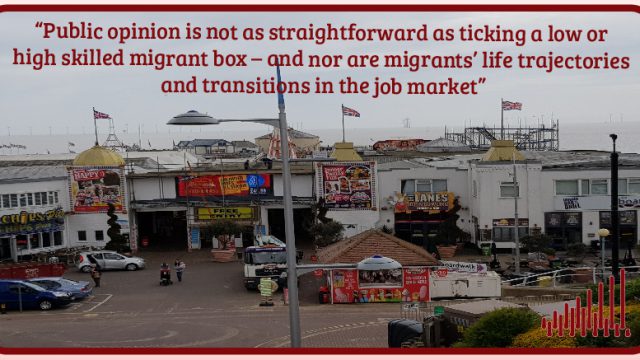

‘High-Skilled Good, Low-Skilled Bad?’ British, Polish and Romanian Attitudes Towards Low-skilled EU Migration

25 Apr 2019

National Institute Economic Review

Articles from the National Institute Economic Review 247

Commentary: Breaking the Brexit impasse: achieving a fair, legitimate and democratic outcome

06 Feb 2019

National Institute Economic Review

Articles from the National Institute Economic Review 244

Articles from the National Institute Economic Review 242

Commentary: Monetary and fiscal policy normalisation as Brexit is negotiated

01 Nov 2017

National Institute Economic Review

Value added from trade for key business and financial service industries: initial estimates

01 Nov 2017

National Institute Economic Review

International trade and UK de-industrialisation

01 Nov 2017

National Institute Economic Review

Articles from the National Institute Economic Review 240

Commentary: The Economic Landscape of the UK

08 May 2017

National Institute Economic Review

Articles from the National Institute Economic Review 239

Economic Policy and Surveillance in Europe: Introduction

01 Feb 2017

National Institute Economic Review

Articles from the National Institute Economic Review 238

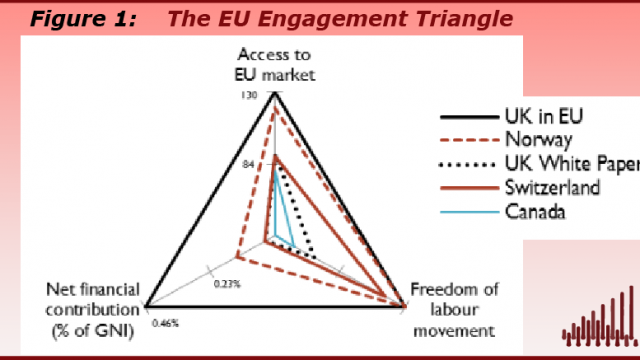

Negotiating the UK’s post-Brexit trade arrangements

02 Nov 2016

National Institute Economic Review

Assessing the impact of trade agreements on trade

02 Nov 2016

National Institute Economic Review

Commentary: Fiscal policy after the Referendum

28 Oct 2016

National Institute Economic Review

Articles from the National Institute Economic Review 237

Commentary: The Referendum Blues: shocking the system

03 Aug 2016

National Institute Economic Review

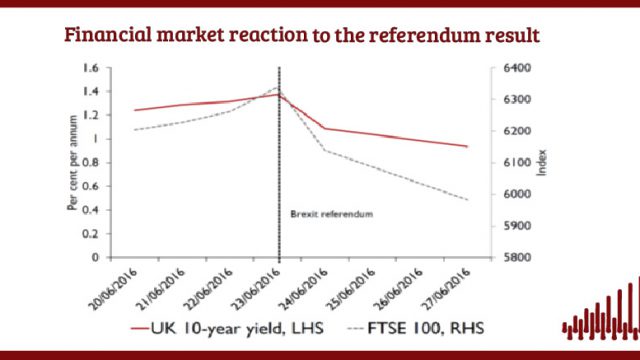

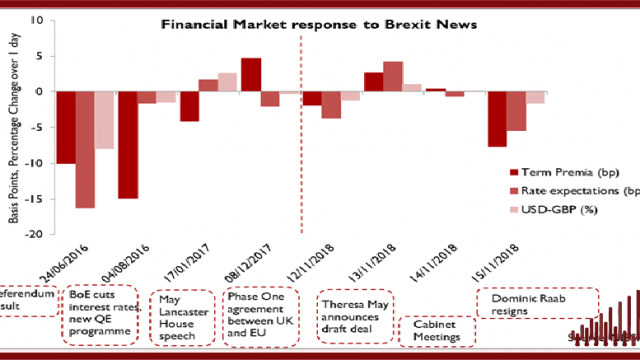

Immediate financial market movements post-Referendum

28 Jul 2016

UK Economic Outlook Box Analysis

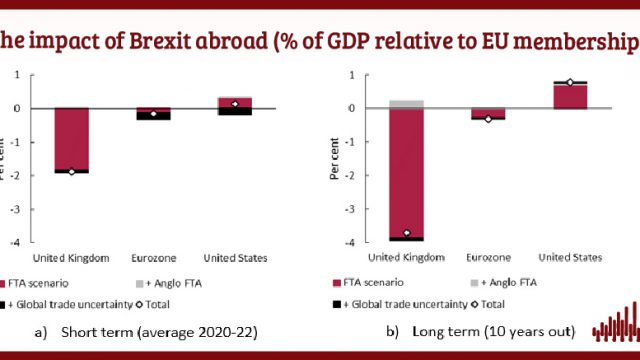

The UK’s Decision to Leave the EU: the Impact on European Economies

28 Jul 2016

Global Economic Outlook Box Analysis

Articles from the National Institute Economic Review 236

Commentary – The economic consequences of leaving the EU

10 May 2016

National Institute Economic Review

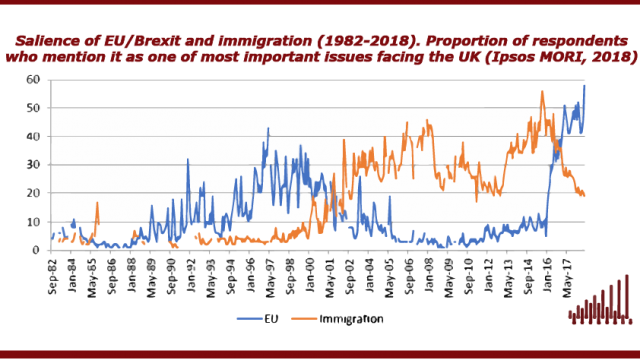

Immigration, free movement and the EU referendum

10 May 2016

National Institute Economic Review

Free movement of services, migration and leaving the EU

10 May 2016

National Institute Economic Review

EU membership, financial services and stability

10 May 2016

National Institute Economic Review

The short-term economic impact of leaving the EU

10 May 2016

National Institute Economic Review

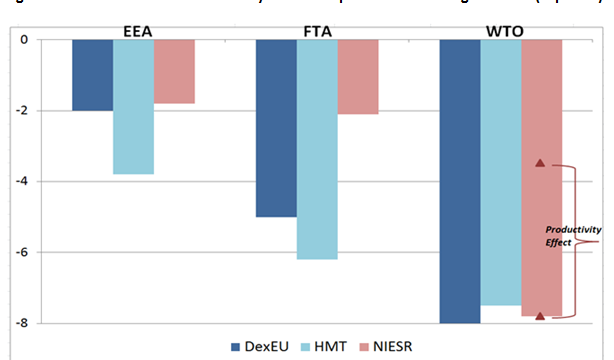

The long-term economic impact of leaving the EU

10 May 2016

National Institute Economic Review

Research Papers

The Northern Ireland Protocol – Lost Opportunities for Northern Ireland?

11 Nov 2022

UK Economic Outlook Box Analysis

The Effects of Covid-19 and Brexit on Firms’ Trading Decisions

07 May 2021

UK Economic Outlook Box Analysis

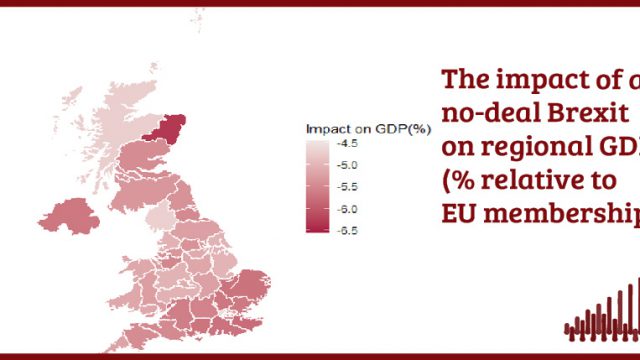

NIESR Briefing: Industry and Regional Effects of a No-Deal Brexit

12 Sep 2019

Topical Briefing

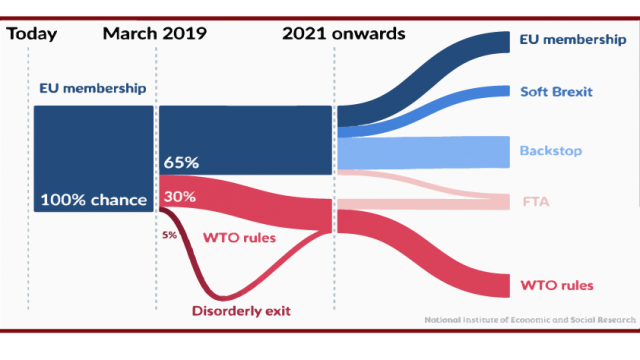

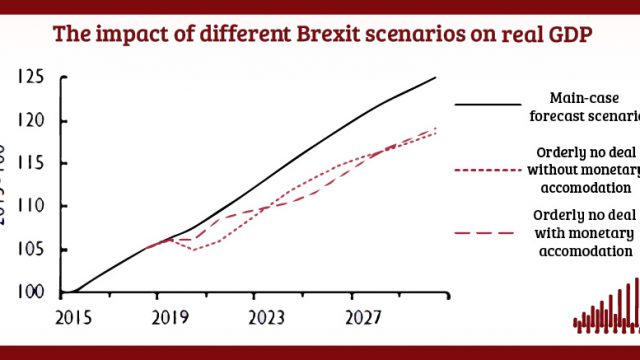

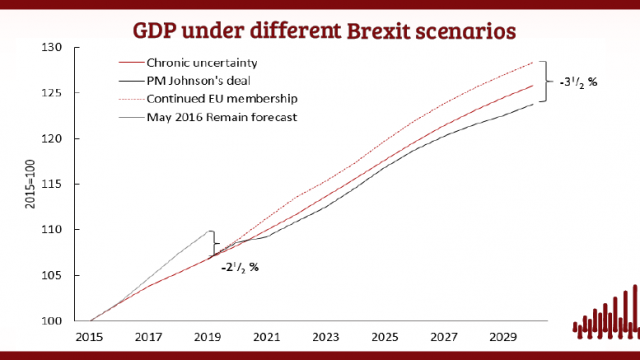

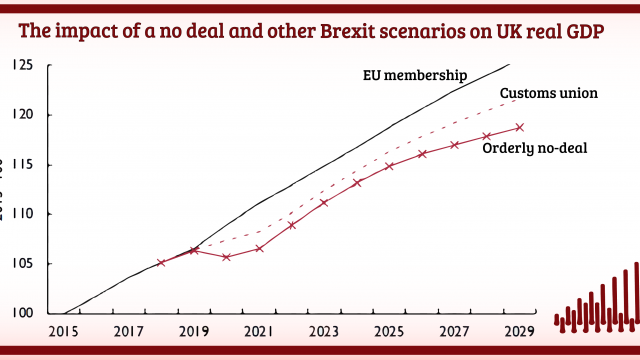

Brexit assumptions and alternative scenarios

29 Aug 2019

UK Economic Outlook Box Analysis

NIESR Briefing: Overview of evidence on UK public attitudes to immigration

19 Aug 2019

Topical Briefing

NIESR Briefing: Overview of evidence on economic impacts of EU immigration

19 Aug 2019

Topical Briefing

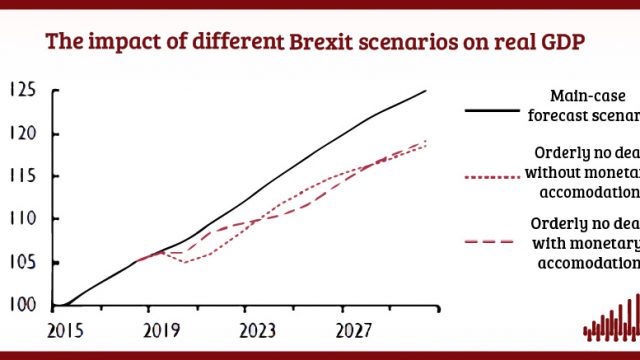

Update: Modelling the Short- and Long-Run Impact of Brexit

29 May 2019

NiGEM Observations

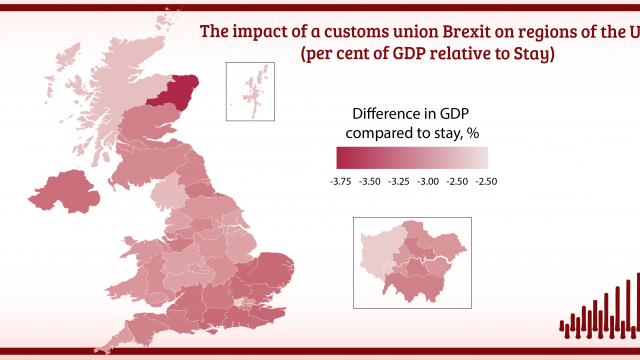

The economic impact on the United Kingdom of a customs union deal with the European Union

08 May 2019

Research Report

Post-Brexit Immigration Policy: Reconciling Public Perceptions with Economic Evidence

11 Oct 2018

Research Report

The OBR’s Approach to Forecasting the Impact of Exiting the European Union – A Submission to the Treasury Committee of the UK Parliament

01 Feb 2018

Policy Papers

Facing the Future: Tackling post-Brexit Labour and Skills Shortages

19 Jun 2017

Policy Papers

The Economic Impact of Brexit-induced Reductions in Migration

07 Dec 2016

Research Report

Employers’ responses to Brexit: The perspective of employers in low skilled sectors

11 Aug 2016

Research Report

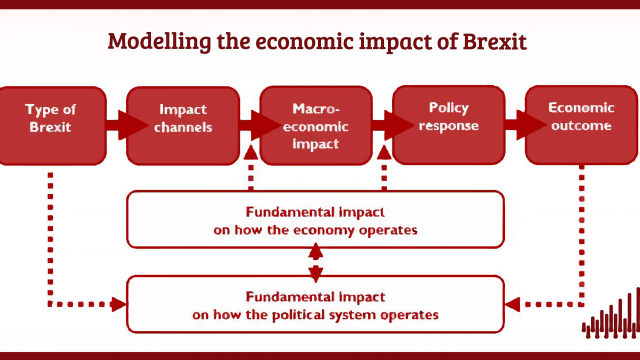

Modelling the long-run economic impact of leaving the European Union

20 Jun 2016

Discussion Papers

Modelling events: The short-term economic impact of leaving the EU

20 Jun 2016

Discussion Papers

The EU Referendum and Fiscal Impact On Low Income Households

09 Jun 2016

Research Report

The Impact of Possible Migration Scenarios after ‘Brexit’ on the State Pension System

02 Jun 2016

Research Report

The long-term macroeconomic effects of lower migration to the UK

24 May 2016

Discussion Papers

The impact of free movement on the labour market: case studies of hospitality, food processing and construction

27 Apr 2016

Research Report

The Long-Term Economic Impact of Reducing Migration in the UK

05 Aug 2014

National Institute Economic Review

The economic implications for the United Kingdom of leaving the European Union

05 Nov 2013

National Institute Economic Review

Migration and productivity: employers’ practices, public attitudes and statistical evidence

05 Nov 2013

Research Report

Examining the relationship between immigration and unemployment using National Insurance Number registration data

09 Jan 2012

Discussion Papers

Our Blog Posts

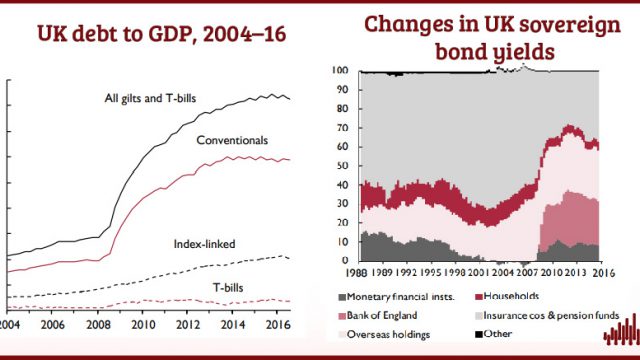

Bremia: A study of the impact of Brexit on bond prices

Sathya Mellina

Corrado Macchiarelli

12 Oct 2020

4 min read

Global value chains after COVID-19 and BREXIT: Is it the end of the world as we know it?

Ana Rincon-Aznar

21 Apr 2020

5 min read

We forgot about immigration – will this turn out to be a grave mistake?

Johnny Runge

09 Dec 2019

4 min read

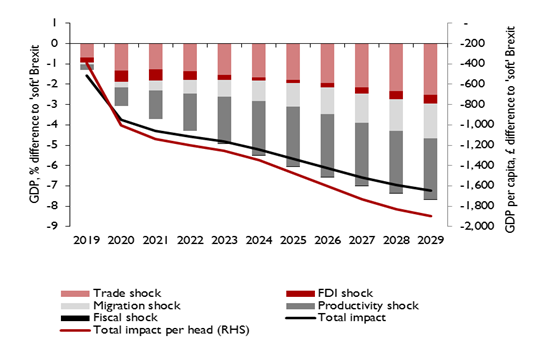

Why we stand by our assessment of a £70bn Brexit impact

Arno Hantzsche

Phil Thornton

Garry Young

14 Nov 2019

5 min read

Hard Choices: the Political Economy of Trade Policy after Brexit

David Vines

Paul Gretton

01 Nov 2019

5 min read

What would lead us to revise our Brexit impact estimates? It’s the politics, stupid …

Arno Hantzsche

25 Sep 2019

6 min read

Freeports, like free lunches, might come with strings attached

Marta Paczos

09 Aug 2019

3 min read

Nothing has changed: No deal does not look to be a good deal

Arno Hantzsche

29 May 2019

6 min read

EU migration after Brexit: High-skilled good, low-skilled bad?

Alexandra Bulat

25 Apr 2019

5 min read

Menu of post-Brexit visas unpalatable to hospitality employers

Heather Rolfe

08 Mar 2019

6 min read

A vague, much repackaged proposal of dubious practical use – the Immigration White paper is here at last

Heather Rolfe

19 Dec 2018

7 min read

The UK is losing its appeal to EU migrants and the new restrictions haven’t even been announced

Heather Rolfe

29 Nov 2018

5 min read

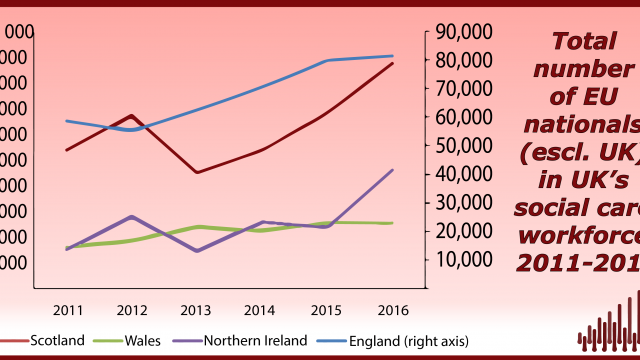

Fewer migrants from the EU also means fewer nurses and doctors

David Nguyen

06 Nov 2018

5 min read

People’s perceptions of EU immigration: it’s the economy, stupid!

Johnny Runge

10 Oct 2018

4 min read

Post-Brexit immigration policy cannot ignore employers’ needs

Heather Rolfe

18 Sep 2018

5 min read

How much would a ‘White Paper Brexit’ cost the UK economy?

Arno Hantzsche

Amit Kara

Cyrille Lenoel

Rebecca Piggott

01 Aug 2018

8 min read

The impact of leaving the European Union is clear: what are we going to do about it?

Jagjit S. Chadha

06 Feb 2018

4 min read

Events

The Economic Impact of Brexit

Beyond Brexit: a programme for UK reform

Joint Round Table: How will the proposed new immigration policies affect food and drink manufacturing?

NIESR Embassies Briefing – The economic outlook beyond the parliamentary Brexit impasse

Jobs: Causes and Consequences of Brexit

Joint Round Table – How will the proposed new immigration policies affect the hospitality sector?

Brexit Countdown: Scenarios and Consequences

NIESR Embassies’ Briefing – The economic outlook in the last quarterly forecast before Brexit

National Institute Economic Review Press Conference – The economic outlook in the last Budget five months before Brexit

Post-Brexit Immigration Policy: Reconciling Public Perceptions with Economic Evidence

NIESR Embassies’ Briefing – Brexit: the trade-offs ahead

Brexit and Trade Choices in Europe and Beyond

National Institute Economic Review February 2018 Press Conference – ‘Brexit: the Trade-offs Ahead’

NIESR and ESCoE Workshop on: ‘Brexit Up Close: Regional and Sectoral Impacts’

Embassies’ Briefing – Prospects for increasing divergence in growth between the UK and the rest of the world as Brexit is negotiated

NIESR’s latest research: ‘Financial Services after Brexit – What next?’

Brexit following Article 50: Implications for the economy, trade and the negotiation process

UK After Brexit

NIESR/HSBC Seminar Series: Mapping the route to Brexit success

Immigration policies for hospitality in a post-Brexit Britain

Immigration policies for food and drink in a post-Brexit Britain

Immigration policies for construction in a post-Brexit Britain

Breakfast briefing – Brexit and immigration

Briefing: Economics of Brexit

NIESR’s latest research – The EU Referendum and Fiscal Impact On Low Income Households

NIESR Modellers Conference – The economic consequences of leaving the EU

NIESR’s latest research – Immigration policy after the EU Referendum: economic impacts

News

Our selection of op-eds, videos and podcasts highlighting the issues facing the UK after the EU referendum.

The Independent, 8th December 2019

“With days to go until the most important election for our economy in living memory, voters will be going to the polling stations with blindfolds on. Fiscal policy — the decisions on taxation and public spending that determine how much the government borrows – is in disarray. The government had already shifted the date of the budget from spring to autumn and now Boris Johnson has promised a “Brexit budget” in February if the Conservatives stay in power. Government departments are working with a one-year spending plan, rather than the three-year version that was promised. Because they can’t make long-term plans, they will struggle to deliver the improvements in services such as health, education and policing that voters are demanding” Read more:

- “With just days until the election, voters have been left in the dark on public spending – parties should be ashamed” by Jagjit Chadha

Prospect, 4th December 2019

“The UK minimum wage has been a great success story since its introduction in 1999. Twenty years on, it is at risk of becoming overly politicised in a growing arms race between the two main parties, both eager to claim the credit for boosting the earnings of millions of low-paid workers across Britain….” Read more:

- “The precarious success of the national minimum wage” by Johnny Runge

LSE blog, 6th December 2019

“The fiscal framework adopted in 2010 built on the success of the experience with monetary policy. The basic mechanism, which was replicated to a great degree in the fiscal case, is that a macroeconomic target that suits society is pursued transparently with the support of independent forecasts of whether the target will be achieved. The target and instrument are bound together by a rule that explains how the instrument will respond to the state of the economy. The advantage of rules-based policies is that other participants in the economy can formulate their plans in a manner consistent with the target and if the policy-maker is going to miss the target, there is scope to explain why and how the economy will get back on track. By binding people into a common path of adjustment, it simply becomes easier to meet the target, which should be exactly what society wants anyway. While monetary policy has more or less been bound by such a framework, our fiscal policy is more or less in disarray.” Read more:

The Independent, 17th November 2019

“As the director of an economic think tank based just metres from parliament, I am aware that I can become overly immersed in the statistics and theories that underpin my analysis of Brexit. The same thing was on my mind when I stepped out of the offices of the National Institute of Economic and Social Research last Friday afternoon to catch the last rays of the sun as they caught the Baroque roof of St John’s church on Smith Square…” Read more:

- “I deal with the economics of Brexit every day, but it took this to remind me how deeply personal it is” by Jagjit Chadha

Videos

Anti-immigration sentiments played an important role in the UK’s vote to leave the EU. This video was produced as part of a project aimed at understanding how people process evidence about the impact of immigration and was shown at 12 focus groups in Kent in April 2018. The aim of the research is to identify more effective forms of communication that enable people to consider evidence in formulating their opinions on immigration. The findings will be published in Autumn 2018.

This conference was designed to coincide with the UK triggering Article 50 and starting its process to withdraw from the EU. Our aim was to look in greater detail at some of the critical issues that will follow. These include what UK Free Trade Agreements might contain and what the negotiating priorities will be, the economic consequences of no longer being within the legal boundary of the European Court of Justice, and what industrial policy should look like. We closed with a panel session on how economists can most effectively communicate with the public over this period.

Watch Dr. Angus Armstrong, Head of Macroeconomics at the National Institute of Economic and Social Research (NIESR), explain research commissioned by the Institute and Faculty of Actuaries (IFoA) on immigration and the State Pension in light of the EU Referendum outcome.

The Missing Debate in the IN/OUT Referendum is the difference between a Single Market and the suggested alternative, a Free Trade Agreement. It turns out this matters a lot for the people of the UK. A Free Trade Agreement doesn’t fully cover services, and services account for 8 out of every 10 jobs in the UK.

Podcasts

You can subscribe to our podcasts on SoundCloud and on iTunes